Landing a job that involves Kubernetes can feel daunting, especially given the technical depth the subject demands. But don’t worry: you’re about to embark on a journey that’ll equip you with the right questions and answers to impress your future employer. Whether you’re a seasoned pro or just dipping your toes into the world of container orchestration, understanding the ins and outs of Kubernetes is key.

Kubernetes Interview Questions: A Comprehensive Guide

When diving into the competitive field of cloud computing, specifically roles involving Kubernetes, being prepared for challenging interview questions is crucial. This guide aims to arm you with insights and answers to some of the most common yet intricate questions you might face. Working through them in advance can significantly boost your confidence, whatever your level of experience.

Why Kubernetes? Employers are looking to gauge your understanding of Kubernetes’ benefits within their infrastructure. It’s not just about acknowledging its popularity but understanding its efficiency in automating deployment, scaling, and management of containerized applications. Highlight its ability to provide a platform for managing containerized workloads and services with declarative configuration and automation as a key advantage.

Explain Pods in Kubernetes. Pods are the smallest, most basic deployable objects in Kubernetes. A Pod represents a single instance of a running process in your cluster and contains one or more containers, such as Docker containers. When explaining pods, stress their significance for running applications and what their containers share: every container in a Pod uses the Pod’s network namespace (one IP address and port space, so containers can reach each other on localhost) and can mount the same storage volumes.
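To make the shared-resources point concrete, here is a minimal sketch that builds a two-container Pod manifest as a Python dictionary and prints it as JSON, which kubectl accepts just as it accepts YAML. The Pod name, container names, images, and mount paths are hypothetical; the point is that both containers mount the same emptyDir volume and, because they share the Pod’s network namespace, could also talk to each other over localhost.

```python
import json


def two_container_pod(name: str) -> dict:
    """Build a Pod manifest whose two containers share one emptyDir volume.

    All names, images, and paths here are hypothetical examples.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            # An emptyDir volume exists for the lifetime of the Pod and is
            # visible to every container that mounts it.
            "volumes": [{"name": "shared-data", "emptyDir": {}}],
            "containers": [
                {
                    # Serves whatever files appear in the shared volume.
                    "name": "web",
                    "image": "nginx:1.25",
                    "volumeMounts": [
                        {"name": "shared-data", "mountPath": "/usr/share/nginx/html"}
                    ],
                },
                {
                    # Writes a fresh index.html into the same volume every 5 seconds.
                    "name": "content-writer",
                    "image": "busybox:1.36",
                    "command": [
                        "sh", "-c",
                        "while true; do date > /data/index.html; sleep 5; done",
                    ],
                    "volumeMounts": [{"name": "shared-data", "mountPath": "/data"}],
                },
            ],
        },
    }


if __name__ == "__main__":
    # Save the output as pod.json and apply it with: kubectl apply -f pod.json
    print(json.dumps(two_container_pod("demo"), indent=2))
```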

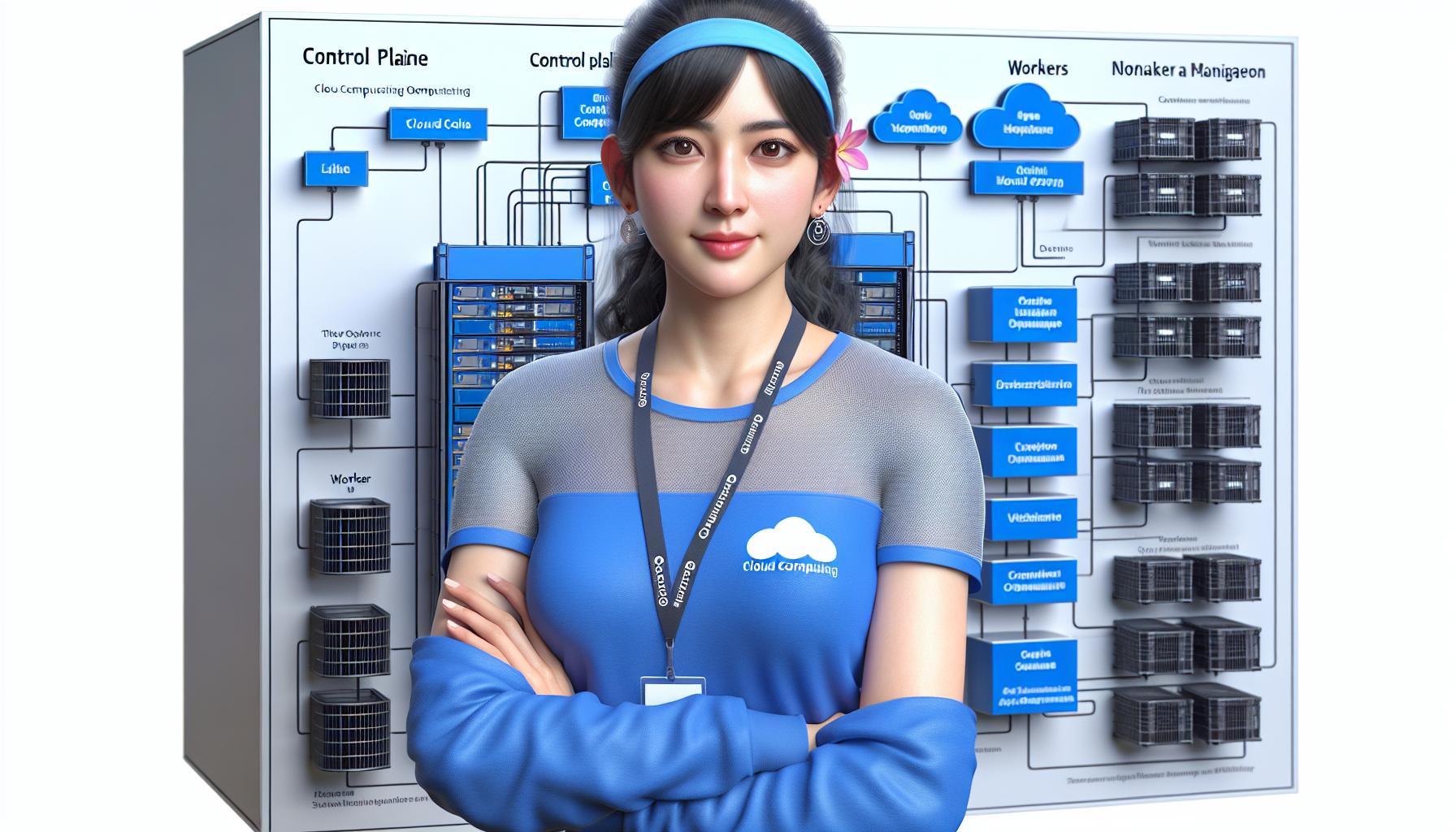

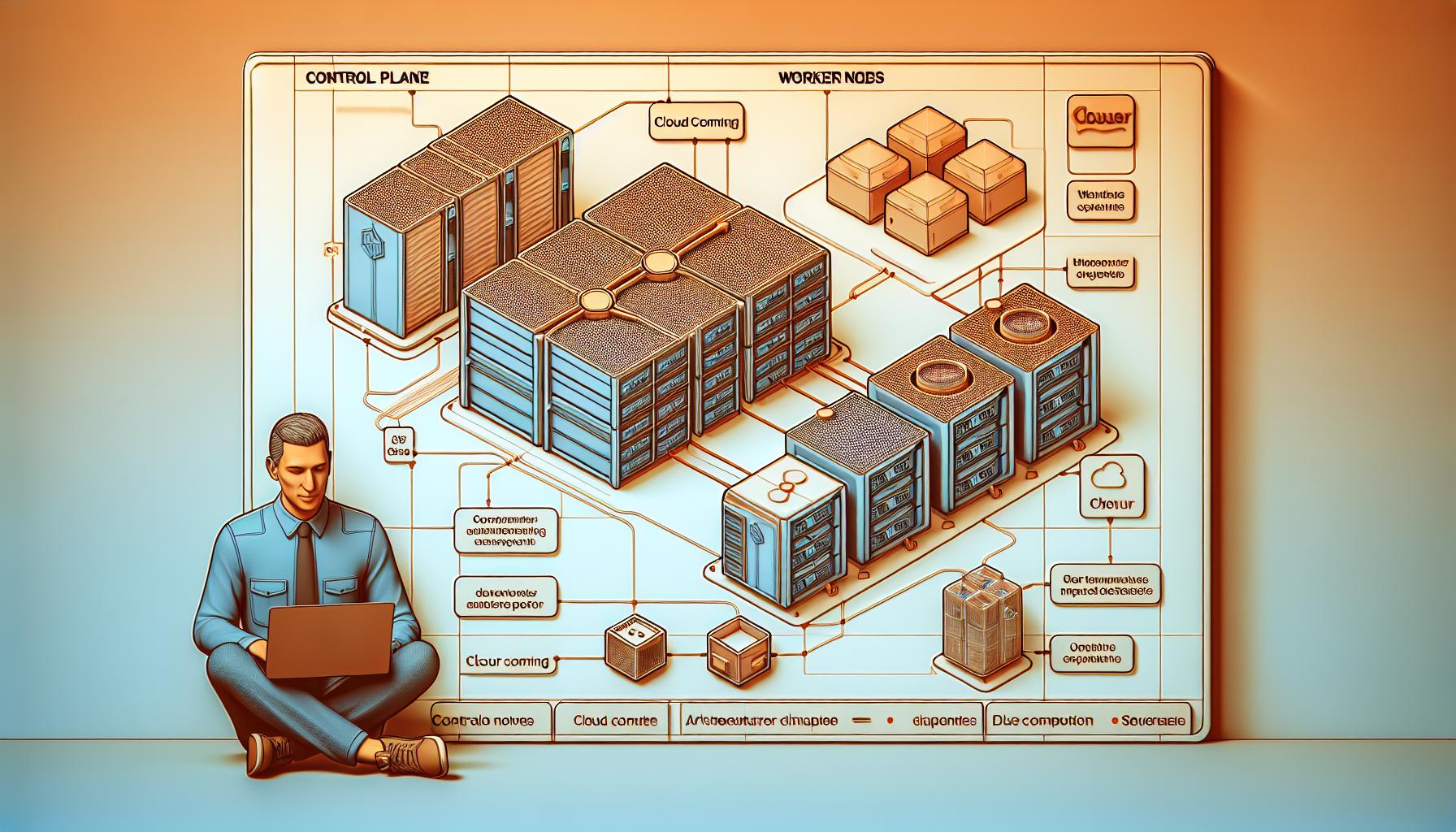

What are the main components of Kubernetes architecture? Your interviewer will likely want to test your understanding of how Kubernetes is put together. Break the architecture down into two main parts, the Control Plane and the Worker Nodes. Here’s a simple breakdown:

| Component | Function |
|---|---|
| Control Plane | Manages the cluster and responds to cluster events (e.g., scheduling pods) |
| Worker Nodes | Where the applications actually run; they host the pods and are managed by the Control Plane |

Highlighting your knowledge of these components showcases your grasp of Kubernetes’ operational environment.

Discuss Services and Ingress in Kubernetes. A Service gives a set of Pods, selected by their labels, a stable network identity (a DNS name and, for most Service types, a virtual IP) so that other parts of the cluster or the outside world can reach them even as individual Pods come and go. Ingress, on the other hand, manages external access to the Services in a cluster, typically by routing HTTP(S) traffic by host name and path; it only takes effect when an Ingress controller is running in the cluster. The official Kubernetes documentation is a good reference for a comprehensive explanation.
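How a Service picks its Pods can be sketched in a few lines. The following is a simplified model of equality-based label selection (the matchLabels style); the Pod records, names, and IPs are hypothetical, and in a real cluster endpoint management is done by a controller in the control plane, not by code like this. It shows the core idea: a Service routes only to Pods whose labels contain every key/value pair in its selector and that are ready to serve traffic.

```python
def matches(selector: dict, labels: dict) -> bool:
    """Equality-based matching: every selector key must be present with the same value."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints_for(selector: dict, pods: list) -> list:
    """Return the IPs a Service with this selector would route to (simplified)."""
    if not selector:
        # A Service defined without a selector gets no automatically managed endpoints.
        return []
    return [p["ip"] for p in pods if p["ready"] and matches(selector, p["labels"])]


# Hypothetical Pods for illustration.
pods = [
    {"name": "web-1", "ip": "10.0.0.4", "labels": {"app": "web", "tier": "frontend"}, "ready": True},
    {"name": "web-2", "ip": "10.0.0.5", "labels": {"app": "web", "tier": "frontend"}, "ready": False},
    {"name": "db-1", "ip": "10.0.0.6", "labels": {"app": "db"}, "ready": True},
]

print(endpoints_for({"app": "web"}, pods))  # ['10.0.0.4']: web-2 is not ready
```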

Section 1: Introduction to Kubernetes

When you’re diving into the world of cloud computing and container orchestration, Kubernetes stands out as a pivotal technology that keeps popping up. If you’re prepping for a role that requires a deep understanding of Kubernetes, it’s essential to grasp its fundamentals first. Kubernetes, often abbreviated as K8s, orchestrates your containerized applications, ensuring they run smoothly and efficiently across a cluster of machines without a hiccup.

Developed by Google and now maintained by the Cloud Native Computing Foundation (CNCF), Kubernetes has revolutionized how applications are deployed, scaled, and managed in cloud environments. For an authoritative overview of Kubernetes, visiting the official Kubernetes documentation provides a wealth of information directly from the source.

At its core, Kubernetes automates the distribution and scheduling of application containers across a cluster, placing them on machines according to their resource requirements instead of by hand. This automation not only eliminates many manual processes involved in deploying and scaling applications but also continuously works to keep the actual state of your applications matching the desired state you’ve defined. Understanding the architecture of Kubernetes is vital, encompassing both the Control Plane and Worker Nodes, each serving a unique purpose in managing the life cycle of applications.
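The desired-state idea is easiest to see as a control loop. Below is a minimal, in-memory sketch, assuming a hypothetical ToyCluster class in place of a real cluster: each pass compares the desired replica count with what is actually running and creates or deletes Pods to close the gap. Real Kubernetes controllers watch the API server and handle many object types, but they follow the same observe, compare, act pattern.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical stand-in for the actual state of a cluster."""
    pods: list = field(default_factory=list)
    next_id: int = 0

    def start_pod(self, app: str) -> None:
        self.pods.append(f"{app}-{self.next_id}")
        self.next_id += 1

    def stop_pod(self) -> None:
        self.pods.pop()


def reconcile(desired_replicas: int, cluster: ToyCluster, app: str) -> list:
    """One pass of a control loop: observe, compare with the desired state, act."""
    actions = []
    gap = desired_replicas - len(cluster.pods)
    for _ in range(gap):  # too few Pods: create the missing ones
        cluster.start_pod(app)
        actions.append("create")
    for _ in range(-gap):  # too many Pods: delete the surplus
        cluster.stop_pod()
        actions.append("delete")
    return actions


cluster = ToyCluster()
print(reconcile(3, cluster, "web"))  # three creates
cluster.pods.pop()                   # simulate a Pod crashing
print(reconcile(3, cluster, "web"))  # one create restores the desired state
```

Running reconcile repeatedly is safe: once the actual state matches the desired state, a pass does nothing, which is why this style tolerates crashes and missed events.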

Here’s a quick rundown of their roles:

| Component | Purpose |
|---|---|
| Control Plane | Manages the state of the Kubernetes cluster, including scheduling applications and maintaining their desired state. |
| Worker Nodes | Host the actual applications running in containers, managed by the Control Plane. |

Kubernetes also introduces Pods, the smallest deployable units that can be created, scheduled, and managed. It’s crucial to understand that in the Kubernetes ecosystem, a Pod represents a single instance of a running process in your cluster. For more insights into how Pods help application deployment and management, the Kubernetes Pods documentation is an excellent resource.

As you gear up for Kubernetes-related interviews, familiarizing yourself with its key components and how they interact is indispensable. Not only will it boost your confidence, but it’ll also give you a solid foundation to tackle more advanced questions that dig into specifics, such as networking, storage, and Pod lifecycle management in Kubernetes. Remember, clear and direct answers that reflect a deep understanding of Kubernetes architecture will distinguish you in your interview.

Section 2: Basic Concepts and Architecture

When you’re gearing up for Kubernetes-related interviews, understanding its basic concepts and architecture is pivotal. Let’s dive deeper into the core elements that make up Kubernetes, ensuring you’re well-prepared to tackle any question that comes your way.

At its heart, Kubernetes is a powerful system designed for automating the deployment, scaling, and management of containerized applications. It groups containers that make up an application into logical units for easy management and discovery. Here, we’ll break down the primary components and their roles within the Kubernetes ecosystem.

Kubernetes Cluster Architecture

A Kubernetes cluster consists of a Control Plane and one or more Worker Nodes. Each has distinct responsibilities, but they work together to manage the state of your applications.

- Control Plane: The brain of the cluster, managing the cluster’s state and configurations. Key components include the API Server, Scheduler, Controller Manager, and etcd storage. The Control Plane decides what runs on all of the cluster’s Nodes, schedules workloads, and manages workloads’ desired states.

- Worker Nodes: These machines actually run your applications. Each node has a Kubelet, an agent that manages the node’s Pods and communicates with the Kubernetes Control Plane. Nodes also run a CRI-compatible container runtime, such as containerd or CRI-O (which typically rely on a low-level runtime like runc), to run containers, and kube-proxy to handle Service networking.

Here’s a simplified representation:

| Component | Role |
|---|---|
| Control Plane | Manages the cluster’s state, including scheduling and responding to cluster events |
| Worker Node | Runs the actual applications and workloads |
| API Server | Front end of the Control Plane; exposes the Kubernetes API through which users and all other components read and change cluster state |
| etcd | Consistent key-value store holding all cluster data, acting as the single source of truth for the cluster |
| Scheduler | Assigns each newly created Pod to the node best able to run it, based on resource requests and other constraints and policies |
| Controller Manager | Runs the built-in controllers (for example, replication and node controllers) that drive the cluster toward its desired state |
| Kubelet | Agent on each node that ensures the containers described in that node’s Pod specs are running and healthy |
| kube-proxy | Maintains network rules on each node so that traffic sent to a Service reaches one of its backing Pods |
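The Scheduler’s job can be summarized as filter, then score. The sketch below is a deliberately simplified model, not kube-scheduler’s actual code: it filters out nodes whose free CPU and memory cannot fit the Pod’s requests, then scores the remaining nodes by how much capacity they would have left, loosely in the spirit of a least-allocated scoring strategy. The Node fields and node names are hypothetical; the real scheduler also weighs taints, affinity, topology spread, and other plugins.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    cpu_total_m: int   # allocatable CPU in millicores
    mem_total_mi: int  # allocatable memory in MiB
    cpu_free_m: int    # allocatable CPU not yet requested by other Pods
    mem_free_mi: int   # allocatable memory not yet requested by other Pods


def schedule(cpu_req_m: int, mem_req_mi: int, nodes: List[Node]) -> Optional[str]:
    """Pick a node for a Pod with the given requests, or None if nothing fits."""
    # Filter: keep only nodes that can fit the Pod's requests.
    feasible = [n for n in nodes
                if n.cpu_free_m >= cpu_req_m and n.mem_free_mi >= mem_req_mi]
    if not feasible:
        return None  # the real scheduler would leave the Pod Pending

    # Score: average fraction of CPU and memory still free after placement.
    def score(n: Node) -> float:
        cpu_left = (n.cpu_free_m - cpu_req_m) / n.cpu_total_m
        mem_left = (n.mem_free_mi - mem_req_mi) / n.mem_total_mi
        return (cpu_left + mem_left) / 2

    return max(feasible, key=score).name


nodes = [
    Node("node-a", 2000, 4096, 500, 1024),
    Node("node-b", 2000, 4096, 1500, 3072),
    Node("node-c", 4000, 16384, 100, 8192),
]
print(schedule(250, 512, nodes))  # node-c lacks free CPU; node-b has more room than node-a
```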

Section 3: Deploying and Managing Applications on Kubernetes

When you’re diving into Kubernetes, one of the critical skills you’ll need to master is deploying and managing applications. This is a cornerstone of Kubernetes operations, and interviewers are keen to assess your hands-on experience and understanding in this area.

First off, deployment in Kubernetes is more than just launching containers. It involves defining your application within configuration files, usually written in YAML or JSON format. This sets the stage for Kubernetes to understand what your application requires to run successfully, including which images to use, the number of replicas, network settings, and more. Familiarizing yourself with the structure and semantics of these configuration files is paramount. For an in-depth guide on writing Kubernetes configuration files, the Kubernetes documentation is an excellent resource.
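Because Kubernetes accepts JSON as well as YAML, one way to see the structure of a configuration file is to build it programmatically. The sketch below assembles a minimal apps/v1 Deployment; the application name, image, and port are hypothetical placeholders. Note that the Deployment’s selector must match the labels on its Pod template, a common source of errors when writing these files by hand.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> dict:
    """Build a minimal apps/v1 Deployment manifest (all argument values are examples)."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,  # how many identical Pods to keep running
            # The selector tells the Deployment which Pods it owns; it must
            # match the labels in the Pod template below.
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest("my-application", "nginx:1.25", replicas=3, port=80)
    # Save the output as my-application.json and deploy it with:
    #   kubectl apply -f my-application.json
    print(json.dumps(manifest, indent=2))
```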

Once your application is defined, deploying it is as simple as running a kubectl command, such as kubectl apply -f my-application.yaml. This tells Kubernetes to read your configuration and instantiate the application as described. Monitoring the deployment’s progress and managing its lifecycle through commands like kubectl get deployments and kubectl rollout status deployment/my-application are skills you’ll be expected to demonstrate.

Managing applications also requires an understanding of services and networking within Kubernetes. Services in Kubernetes allow your applications to communicate with each other and with the outside world. Getting to grips with the different types of services (ClusterIP, NodePort, LoadBalancer) and when to use them is crucial. For a detailed explanation, visiting the Kubernetes Services documentation can clear up any confusion.
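To contrast the three Service types, here is a small sketch that builds a Service manifest of a chosen type. The service names, label, and port numbers are hypothetical. It encodes two real constraints: an explicit nodePort only makes sense for NodePort and LoadBalancer Services, and it must fall within the cluster’s NodePort range, which is 30000-32767 by default (clusters can configure a different range, which this sketch ignores).

```python
from typing import Optional

SERVICE_TYPES = ("ClusterIP", "NodePort", "LoadBalancer")
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)  # 30000-32767 inclusive


def service_manifest(name: str, app: str, port: int, target_port: int,
                     svc_type: str = "ClusterIP",
                     node_port: Optional[int] = None) -> dict:
    """Build a Service that routes `port` to `target_port` on Pods labeled app=<app>."""
    if svc_type not in SERVICE_TYPES:
        raise ValueError(f"unsupported Service type: {svc_type}")
    port_spec = {"protocol": "TCP", "port": port, "targetPort": target_port}
    if node_port is not None:
        # ClusterIP Services are reachable only inside the cluster, so no node port.
        if svc_type == "ClusterIP":
            raise ValueError("nodePort requires type NodePort or LoadBalancer")
        if node_port not in DEFAULT_NODE_PORT_RANGE:
            raise ValueError(f"nodePort {node_port} is outside the default range")
        port_spec["nodePort"] = node_port
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": svc_type,
            "selector": {"app": app},
            "ports": [port_spec],
        },
    }


internal = service_manifest("web", "web", 80, 8080)                        # in-cluster only
external = service_manifest("web-np", "web", 80, 8080, "NodePort", 30080)  # reachable on <node-ip>:30080
```

In short: ClusterIP for traffic that stays inside the cluster, NodePort to open a fixed port on every node, and LoadBalancer to have a cloud provider (or an equivalent controller) provision an external load balancer in front of the Service.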

Scaling applications is another vital concept. Kubernetes simplifies this process, allowing you to adjust the number of pods to meet demand. The command kubectl scale deployment/my-application --replicas=3 sets the number of replicas in your deployment to three, showcasing how easily Kubernetes handles application scaling.

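Finally, updating and rolling back applications is a regular task in application lifecycle management. Deployments natively support two update strategies: RollingUpdate (the default), which gradually replaces old Pods with new ones, and Recreate, which stops all old Pods before starting new ones. Patterns such as blue-green deployments are not a built-in strategy but can be built on top, for example by running two Deployments and switching a Service’s selector between them. Understanding how to perform updates without downtime and how to roll back if something goes wrong (for example, with kubectl rollout undo deployment/my-application) are skills that can set you apart in Kubernetes interviews.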
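A common follow-up question is how a rolling update stays available. A Deployment’s RollingUpdate strategy is governed by maxSurge (how many Pods above the desired count may exist) and maxUnavailable (how many below it may be unavailable), both 25% by default; when given as percentages, maxSurge is rounded up and maxUnavailable rounded down. The sketch below computes the resulting bounds. It omits edge cases the real controller handles, such as both values resolving to zero.

```python
def resolve(value, replicas: int, round_up: bool) -> int:
    """Turn an absolute number or a percentage string like "25%" into a Pod count."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1])  # integer math avoids float rounding surprises
        return -(-scaled // 100) if round_up else scaled // 100
    return int(value)


def rollout_bounds(replicas: int, max_surge="25%", max_unavailable="25%"):
    """Return (max total Pods, min available Pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    return replicas + surge, replicas - unavailable


print(rollout_bounds(10))       # defaults: up to 13 Pods, at least 8 available
print(rollout_bounds(3, 1, 0))  # surge one at a time, never drop below 3 available
```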

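| Action | Command |
|---|---|
| Deploy an application | kubectl apply -f my-application.yaml |
| List deployments | kubectl get deployments |
| Check rollout progress | kubectl rollout status deployment/my-application |
| Scale a deployment | kubectl scale deployment/my-application --replicas=3 |
| Roll back a deployment | kubectl rollout undo deployment/my-application |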

Section 4: Scaling and Monitoring in Kubernetes

Scaling and monitoring are two pivotal areas within Kubernetes that ensure your applications are not just running, but also meeting the demands gracefully as they grow. Grasping these concepts can significantly enhance your performance in Kubernetes interviews.

Horizontal Pod Autoscaling (HPA) and Vertical Pod Autoscaling (VPA) are two approaches to scaling applications based on demand. HPA increases or decreases the number of pod replicas, while VPA adjusts the CPU and memory requests (and, proportionally, limits) of the pods. HPA is built into Kubernetes, whereas VPA is an add-on you install separately. Understanding when to use HPA or VPA is critical. For instance, HPA is ideal for stateless applications like web servers, where scaling out is beneficial. On the other hand, VPA suits stateful applications or databases that are hard to scale out and instead need more resources per pod.

To configure HPA, you’d typically use a command like:

kubectl autoscale deployment <deployment_name> --cpu-percent=50 --min=1 --max=10

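This command creates a HorizontalPodAutoscaler that targets an average CPU utilization of 50% across the deployment’s pods, measured relative to each pod’s CPU request, by adjusting the number of replicas between 1 and 10. For the utilization to be computed, the pods must declare CPU requests and a metrics source such as Metrics Server must be running.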
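Interviewers often ask how the HPA arrives at a replica count. The Kubernetes documentation describes the core rule as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no scaling when that ratio is within a tolerance of 1.0 (0.1 by default). The sketch below applies that rule and clamps the result to the min/max bounds; it ignores details the real controller handles, such as unready Pods, missing metrics, and scale-down stabilization windows.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_util: float, target_util: float,
                         min_replicas: int, max_replicas: int,
                         tolerance: float = 0.1) -> int:
    """Simplified HPA rule: scale in proportion to how far the metric is from its target.

    Utilization values are percentages of the Pods' CPU requests (e.g. 50 for 50%).
    """
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to the target: leave it alone
    else:
        desired = math.ceil(current_replicas * ratio)
    # Never go outside the --min / --max bounds.
    return max(min_replicas, min(max_replicas, desired))


# With the command above (--cpu-percent=50 --min=1 --max=10):
print(hpa_desired_replicas(4, 90, 50, 1, 10))  # 4 * 1.8 = 7.2, rounded up to 8
print(hpa_desired_replicas(4, 52, 50, 1, 10))  # within tolerance: stays at 4
```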

Monitoring in Kubernetes goes hand in hand with scaling. Tools like Prometheus and Grafana are often used together for this purpose. Prometheus collects and stores metrics as time series data, while Grafana provides the visualization layer for those metrics. Integrating these tools with Kubernetes allows you to monitor applications’ performance in real-time.

Here’s a brief overview of what metrics you might monitor:

- CPU and memory usage

- Number of running, pending, and failed pods

- Network IO

- Disk IO

These metrics provide insights into your application’s health and performance, guiding scaling decisions to ensure optimal operation.
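As a small illustration of the pod-count metric, the sketch below tallies Pods by status.phase from the JSON that kubectl get pods -o json prints. In a real setup Prometheus would collect this kind of data continuously (for example via an exporter such as kube-state-metrics); the sample input here is hypothetical and trimmed to the fields the function reads.

```python
import json
from collections import Counter


def phase_counts(pod_list_json: str) -> Counter:
    """Count Pods per phase (Running, Pending, Succeeded, Failed, Unknown)."""
    pod_list = json.loads(pod_list_json)
    return Counter(item.get("status", {}).get("phase", "Unknown")
                   for item in pod_list.get("items", []))


# Hypothetical, heavily trimmed output of: kubectl get pods -o json
sample = json.dumps({
    "kind": "List",
    "items": [
        {"metadata": {"name": "web-1"}, "status": {"phase": "Running"}},
        {"metadata": {"name": "web-2"}, "status": {"phase": "Running"}},
        {"metadata": {"name": "job-1"}, "status": {"phase": "Failed"}},
        {"metadata": {"name": "web-3"}, "status": {"phase": "Pending"}},
    ],
})
print(phase_counts(sample))
```

Keep in mind that a crash-looping Pod usually still reports the Running phase, so phase counts alone can hide problems; container restart counts are worth watching too.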

For the resource metrics that the HPA and kubectl top rely on, you might explore Kubernetes Metrics Server; for dashboards, the official Grafana documentation explains how to connect Grafana to Prometheus.

Understanding how to scale and monitor applications in a Kubernetes environment is essential. It’s not just about keeping your applications running; it’s about ensuring they’re performing optimally under varying loads and spotting issues before they affect your service. With knowledge in these areas, you’re better positioned to discuss Kubernetes’ practical aspects confidently in any interview setting.

Section 5: Troubleshooting and Debugging in Kubernetes

When you’re diving into Kubernetes, understanding how to troubleshoot and debug issues within your clusters is essential. This skill not only helps in resolving application-related problems quickly but also ensures the smooth operation of your services. Let’s explore some key strategies and tools that are vital for efficient troubleshooting and debugging in a Kubernetes environment.

First off, you should familiarize yourself with kubectl, Kubernetes’ command-line tool, which is indispensable for investigating issues. Kubectl commands enable you to inspect running Pods, view logs, and execute commands within containers, providing immediate insights into your applications’ behavior. For example, kubectl get pods helps you list all Pods in your namespace, showing their current status, which is a good starting point for troubleshooting.
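Going one step beyond the STATUS column, the following sketch scans kubectl get pods -o json output for common warning signs: Pods outside the Running and Succeeded phases, containers stuck in a waiting state (for example CrashLoopBackOff or ImagePullBackOff), and containers with many restarts. The restart threshold and the sample Pods are hypothetical; the field names (status.phase, status.containerStatuses, restartCount, state.waiting.reason) follow the Pod API.

```python
import json


def pods_needing_attention(pod_list_json: str, restart_threshold: int = 3) -> list:
    """Return (pod name, finding) pairs worth investigating further."""
    findings = []
    for item in json.loads(pod_list_json).get("items", []):
        name = item["metadata"]["name"]
        status = item.get("status", {})
        phase = status.get("phase", "Unknown")
        if phase not in ("Running", "Succeeded"):
            findings.append((name, f"phase={phase}"))
        for cs in status.get("containerStatuses", []):
            waiting = cs.get("state", {}).get("waiting")
            if waiting:
                reason = waiting.get("reason", "unknown")
                findings.append((name, f"{cs['name']} waiting: {reason}"))
            if cs.get("restartCount", 0) >= restart_threshold:
                findings.append((name, f"{cs['name']} restarted {cs['restartCount']} times"))
    return findings


# Hypothetical, trimmed kubectl get pods -o json output.
sample = json.dumps({"items": [
    {"metadata": {"name": "web-1"}, "status": {"phase": "Running", "containerStatuses": [
        {"name": "web", "restartCount": 0, "state": {"running": {}}}]}},
    {"metadata": {"name": "api-1"}, "status": {"phase": "Running", "containerStatuses": [
        {"name": "api", "restartCount": 5, "state": {"waiting": {"reason": "CrashLoopBackOff"}}}]}},
    {"metadata": {"name": "batch-1"}, "status": {"phase": "Pending", "containerStatuses": [
        {"name": "worker", "restartCount": 0, "state": {"waiting": {"reason": "ImagePullBackOff"}}}]}},
]})

for pod, finding in pods_needing_attention(sample):
    print(pod, finding)
```

Each finding suggests a next step: kubectl describe pod <pod-name> shows the events behind a waiting reason, and kubectl logs <pod-name> --previous shows the output of the last terminated container instance.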

Another crucial aspect is understanding logs, which are often your first port of call when troubleshooting. Kubernetes makes log access straightforward: you can use kubectl logs <pod-name> to quickly retrieve the logs of any Pod. Sometimes you might need to dig deeper and access logs from a specific container within a Pod. In such cases, the command kubectl logs <pod-name> -c <container-name> comes in handy.

In situations where you need real-time investigation, kubectl exec plays a vital role. This command lets you execute commands directly inside your container, offering a hands-on approach to troubleshooting. For instance, running kubectl exec -it <pod-name> -- /bin/bash opens an interactive shell inside the Pod’s container (provided the image includes bash; minimal images may only offer /bin/sh, or no shell at all), letting you run commands as if you were sitting inside the container.

Monitoring and metrics are also pivotal in diagnosing issues. Tools like Prometheus and Grafana are widely used within the Kubernetes ecosystem for monitoring container metrics and visualizing them, respectively. They can help you identify patterns or anomalies in your system’s behavior over time, guiding you towards the root cause of problems.

To solidify your understanding, here’s a practical scenario: Let’s say you’ve deployed a new version of your application, and users are experiencing delays. By examining the logs (kubectl logs <pod-name>) and metrics via Prometheus and Grafana, you discover that the latency spike correlates with a recent deployment. This insight can guide your troubleshooting efforts, possibly leading you to roll back the deployment or investigate changes made in the latest version.
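The reasoning in this scenario, comparing latency before and after a deployment timestamp, can be sketched as follows. The samples are hypothetical (timestamp in seconds, latency in milliseconds) and stand in for data you would export from Prometheus; the 1.5x threshold is an arbitrary illustration, and a real analysis would usually compare percentiles such as p95 rather than means.

```python
from statistics import mean


def latency_regression(samples, deploy_ts, threshold=1.5):
    """Compare mean latency before and after a deployment.

    samples: list of (timestamp, latency_ms) pairs.
    Returns (mean_before, mean_after, regressed).
    """
    before = [ms for ts, ms in samples if ts < deploy_ts]
    after = [ms for ts, ms in samples if ts >= deploy_ts]
    if not before or not after:
        raise ValueError("need samples on both sides of the deployment")
    mean_before, mean_after = mean(before), mean(after)
    return mean_before, mean_after, mean_after > threshold * mean_before


# Hypothetical samples; the new version was rolled out at t=130.
samples = [(100, 120), (110, 130), (120, 125), (130, 410), (140, 395), (150, 420)]
print(latency_regression(samples, deploy_ts=130))
```

If the regression flag comes back true, kubectl rollout undo deployment/<name> would revert to the previous revision while you investigate the new version.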

Conclusion

Arming yourself with a deep understanding of Kubernetes is key to acing your next job interview in this field. From grasping the basics of its architecture to mastering deployment and troubleshooting techniques, you’ve now got a comprehensive toolkit at your disposal. Remember the importance of practical scenarios to illustrate your problem-solving skills. Use tools like Prometheus and Grafana to showcase your ability to monitor and optimize containerized applications effectively. With this knowledge, you’re well-prepared to tackle even the most challenging questions and stand out as a knowledgeable candidate in your Kubernetes interviews. Keep learning and practicing, and you’ll be on your way to securing that coveted role.