Landing a job that involves Azure Data Factory means you’ll need to be on top of your game, especially when it comes to the interview. Whether you’re a seasoned pro or just diving into the world of data integration and ETL processes, knowing the right answers can set you apart from the competition.

Azure Data Factory Interview Questions

When you’re preparing for an interview that focuses on Azure Data Factory (ADF), it helps to work through the kinds of questions you’re likely to be asked. This section covers some of the most common ones so you can stand out in your Azure Data Factory job interview.

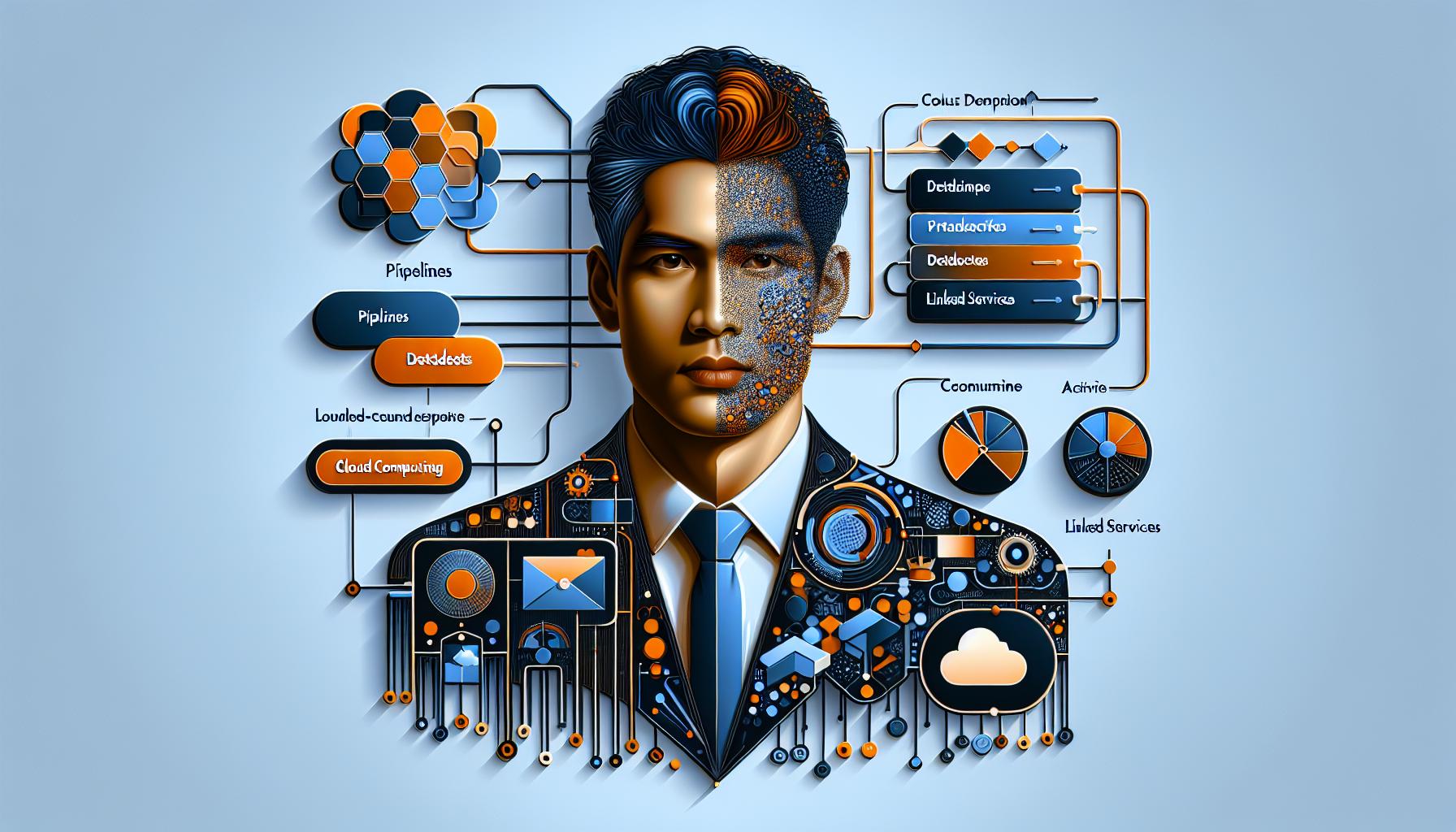

Understanding Azure Data Factory

First off, let’s set the stage with what ADF actually is. Azure Data Factory is a cloud-based data integration service that allows you to create, schedule, and orchestrate data integration workflows at scale. Expect interview questions about the components and architecture of Azure Data Factory: you might be asked to explain how ADF works and to describe key components such as pipelines, datasets, linked services, and activities. A solid grasp of these terms and their functions within ADF is fundamental.

Here’s a quick rundown of some core components you should be familiar with:

- Pipelines: Logical groupings of activities that together perform a specific task.

- Datasets: Representations of data structures within the storage resources.

- Linked Services: Connection definitions, much like connection strings, that tell ADF how to reach external data stores and compute resources.

- Activities: Processing steps in a pipeline such as data movement, data transformation, or control activities.

Practical Scenarios and Problem-Solving

Be ready to tackle scenario-based questions that assess your problem-solving skills and your ability to apply ADF components in real-life situations. You might be given a scenario where you need to transfer data from different sources into a data warehouse efficiently using Azure Data Factory. Your response should illustrate your understanding of data movement and transformation processes within ADF.

Advanced Features and Optimization Techniques

To really shine in your interview, make sure you’re also acquainted with advanced features such as mapping data flows, which provide a visual design environment for building data transformations. Discussing how you’ve optimized data pipelines for performance in past projects can noticeably bolster your candidacy. Optimization might involve strategies such as partitioning data so it can be copied in parallel, or choosing and sizing the integration runtime that performs the data movement.

1. What is Azure Data Factory?

When diving into the world of cloud computing, you’ll quickly encounter Azure Data Factory (ADF), a pivotal service offering from Microsoft. Understanding ADF is vital, especially if you’re aiming to secure a job in cloud computing or data engineering. As you gear up for your interview, you’ll want to confidently articulate what Azure Data Factory is and how it functions.

Azure Data Factory is essentially Microsoft’s cloud-based data integration service, enabling you to create, schedule, and orchestrate data workflows. With ADF, you can seamlessly move and transform data across various data stores, whether they reside in the cloud or on-premises. Its capabilities make it a cornerstone for data-driven projects, ranging from simple data movement to complex ETL processes.

At its core, ADF provides a comprehensive suite of services, including:

- Data Movement: ADF lets you transfer data efficiently between supported data stores.

- Data Transformation: Use Azure’s compute services, such as HDInsight Hadoop, Spark, Azure Data Lake Analytics, and Azure Machine Learning, to process and transform your data.

- Workflow Orchestration: Create, schedule, and monitor data pipelines, ensuring your data workflows are executed reliably and efficiently.

Four components sit at the heart of ADF:

- Pipelines: The building blocks for data integration workflows.

- Datasets: Named references to the data, inside a data store, that activities read or write.

- Linked Services: Connection definitions, much like connection strings, that link ADF to data stores and compute resources.

- Activities: The tasks performed within the pipelines.

For a deeper understanding of how Azure Data Factory operates, Microsoft’s official documentation provides a wealth of knowledge and is an excellent starting point. Also, exploring detailed use cases and tutorials on sites like Microsoft Learn can further cement your understanding of ADF’s capabilities and practical applications.

Grasping the intricacies of Azure Data Factory not only prepares you for potential interview questions but also equips you with the knowledge to apply ADF’s powerful features in real-world data scenarios. Remember, demonstrating a comprehensive understanding of ADF’s components, architecture, and potential use cases will set you apart in your job interview.

2. How does Azure Data Factory differ from SSIS?

Understanding the differences between Azure Data Factory (ADF) and SQL Server Integration Services (SSIS) is crucial for anyone stepping into the field of data integration and ETL (Extract, Transform, Load) processes. While both are powerful tools used for data movement and transformation, they serve different needs and operate within distinct environments.

First and foremost, ADF is a cloud-based data integration service that allows you to create, schedule, and orchestrate data workflows in the Azure environment. It’s designed to facilitate the movement and transformation of data across various data stores, both on-premises and in the cloud. ADF supports a wide range of data sources and provides a highly scalable environment for managing complex data processing workloads.

On the other hand, SSIS is a component of SQL Server, a relational database management system, that provides a rich set of tools for building complex on-premises ETL solutions. SSIS is tailored for data warehousing applications and is commonly used for data integration and transformation tasks within the SQL Server ecosystem.

Here’s a quick comparison to highlight key differences:

| Feature | Azure Data Factory | SSIS |
|---|---|---|
| Deployment | Cloud-based | On-premises, or in the cloud on an Azure VM or the Azure-SSIS integration runtime |
| Scalability | Automatically managed | Manually configured |
| Managed Service | Yes | No |
| Data Source Support | Extensive, including cloud-native sources | More limited, primarily on-premises sources |
| Pricing | Pay-as-you-go | License-based |

ADF shines in environments that require seamless integration with other Azure services, such as Azure HDInsight for big data processing or Azure Machine Learning for advanced analytics projects. Its ability to automate the movement and transformation of large volumes of data across different cloud services makes it an essential tool for cloud-based data pipelines.

SSIS, while more limited in terms of scalability and cloud integration, excels in on-premises data transformation tasks and is deeply integrated with SQL Server. It’s the go-to tool for SQL Server administrators and developers for building high-performance data integration solutions.

3. What are the main components of Azure Data Factory?

Azure Data Factory (ADF) serves as the backbone for data movement and orchestration in the cloud. Understanding its components is crucial for anyone looking to leverage ADF effectively. Here’s a breakdown of the main elements you’ll need to be familiar with.

Pipelines

Think of pipelines as the high-level containers orchestrating your data movement and transformation tasks. They enable you to manage the activities in a logical sequence. Each pipeline can consist of one or multiple activities, which can be executed sequentially or in parallel, depending on your workflow needs.

Datasets

Datasets represent the structure of your data within Azure Data Factory. They define the format and location of the data, serving as inputs and outputs for the activities in your pipelines. Whether your data resides in Azure Blob Storage, Azure SQL Database, or any other supported data store, you’ll define a dataset for each source and destination in your data flow.

Linked Services

Linked services are much like connection strings: they give ADF the information it needs to connect to external resources. You can think of them as the credentials and connection details your pipelines need to access data stores or compute resources, such as a database, a file system, a web service, or a compute service like Azure HDInsight.

Activities

Activities are the tasks you configure within a pipeline. They can range from data movement (copy activity) to data transformation (data flows) and control activities like loops and conditionals. Each activity performs a specific function, contributing to the overall data processing goal of the pipeline.
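As a rough sketch of how these four components reference one another, the Python snippet below builds trimmed definitions as plain dictionaries in the general shape of ADF’s JSON. Every name (`BlobStorageLS`, `InputCsvDS`, `CopyCsvPipeline`, and so on) is made up for illustration, the connection string is a placeholder, real definitions carry more properties, and the `unresolved_references` helper is hypothetical rather than part of any ADF SDK.

```python
# All names below are hypothetical; only the overall shape mirrors ADF's JSON.
linked_services = [
    {
        "name": "BlobStorageLS",
        "properties": {
            "type": "AzureBlobStorage",
            "typeProperties": {"connectionString": "<placeholder>"},
        },
    }
]


def dataset(name, linked_service_name):
    """Build a trimmed dataset definition bound to one linked service."""
    return {
        "name": name,
        "properties": {
            "type": "DelimitedText",
            "linkedServiceName": {
                "referenceName": linked_service_name,
                "type": "LinkedServiceReference",
            },
        },
    }


datasets = [dataset("InputCsvDS", "BlobStorageLS"), dataset("OutputCsvDS", "BlobStorageLS")]

# A pipeline groups activities; this one holds a single Copy activity.
pipeline = {
    "name": "CopyCsvPipeline",
    "properties": {
        "activities": [
            {
                "name": "CopyInputToOutput",
                "type": "Copy",
                "inputs": [{"referenceName": "InputCsvDS", "type": "DatasetReference"}],
                "outputs": [{"referenceName": "OutputCsvDS", "type": "DatasetReference"}],
                "typeProperties": {
                    "source": {"type": "DelimitedTextSource"},
                    "sink": {"type": "DelimitedTextSink"},
                },
            }
        ]
    },
}


def unresolved_references(pipeline, datasets, linked_services):
    """Return referenced dataset / linked service names that are not defined."""
    dataset_names = {d["name"] for d in datasets}
    service_names = {s["name"] for s in linked_services}
    missing = []
    # Activities point at datasets ...
    for activity in pipeline["properties"]["activities"]:
        for ref in activity.get("inputs", []) + activity.get("outputs", []):
            if ref["referenceName"] not in dataset_names:
                missing.append(ref["referenceName"])
    # ... and datasets point at linked services.
    for d in datasets:
        service = d["properties"]["linkedServiceName"]["referenceName"]
        if service not in service_names:
            missing.append(service)
    return missing


print(unresolved_references(pipeline, datasets, linked_services))
```

The chain runs in one direction: activities name datasets, and datasets name linked services, which is why a broken linked service tends to surface as a failure in every activity that touches its datasets.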

Understanding these components in depth is pivotal for designing, deploying, and managing data integration and transformation solutions in Azure. For more detailed insights on Azure Data Factory, consider exploring the official documentation by Microsoft. Also, familiarizing yourself with real-life scenarios and solutions on platforms like GitHub can significantly enhance your practical knowledge and skills.

4. How can you move data in Azure Data Factory?

Moving data is a core functionality in Azure Data Factory (ADF), allowing you to efficiently transfer data between various data stores. Understanding how to leverage ADF for data movement is crucial for any cloud computing professional. This section will guide you through the key methods to optimize data transfer processes within ADF.

Utilizing Copy Activities

At its simplest, data movement in ADF is accomplished through the Copy activity, which copies data from a source data store to a sink (destination) data store and supports a wide array of sources and sinks. For a detailed list, see Microsoft’s documentation on supported data stores and formats.

When setting up a Copy Activity, you’ll configure:

- Source dataset: Specifies the data you wish to copy.

- Sink dataset: Defines where the data will be copied to.

- Mapping: Determines how source data fields are mapped to destination fields.
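The three settings above can be sketched as a small Python helper that assembles a Copy activity definition as a dictionary. This is a minimal illustration, not a complete definition: the helper `build_copy_activity`, the dataset names, and the column names are all hypothetical, and the source and sink types are fixed to a delimited-text source and an Azure SQL sink purely as an assumption for the example.

```python
def build_copy_activity(name, source_dataset, sink_dataset, column_map):
    """Build a Copy activity definition with an explicit column mapping.

    column_map maps source column names to sink column names.
    """
    return {
        "name": name,
        "type": "Copy",
        # Source dataset: what to read.
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        # Sink dataset: where to write.
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": "DelimitedTextSource"},  # assumed source type
            "sink": {"type": "AzureSqlSink"},  # assumed sink type
            # Mapping: how source columns line up with sink columns.
            "translator": {
                "type": "TabularTranslator",
                "mappings": [
                    {"source": {"name": src}, "sink": {"name": dst}}
                    for src, dst in column_map.items()
                ],
            },
        },
    }


activity = build_copy_activity(
    "CopySales", "SalesCsvDS", "SalesTableDS", {"id": "SaleId", "amt": "Amount"}
)
print(activity["typeProperties"]["translator"]["mappings"])
```

If the source and sink columns already share names, the explicit mapping can usually be left out and the default mapping relied on instead.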

Pipeline Orchestration

Beyond simple copy tasks, ADF allows for complex data movement scenarios through pipeline orchestration. A pipeline in ADF is a logical grouping of activities that can be managed and executed as a single unit. You might combine multiple Copy activities with data transformation activities to build complex data integration workflows.
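One way to reason about orchestration is to look at the `dependsOn` entries that activities carry in a pipeline definition. The sketch below, which assumes Python 3.9+ for `graphlib`, groups three made-up activities into "waves" that could run in parallel. The activity names and the `execution_waves` helper are hypothetical, and the result only illustrates the ordering that dependencies imply, not how ADF schedules work internally.

```python
from graphlib import TopologicalSorter

# Hypothetical activities: two copies feed one transformation.
activities = [
    {"name": "CopyOrders", "type": "Copy", "dependsOn": []},
    {"name": "CopyCustomers", "type": "Copy", "dependsOn": []},
    {
        "name": "TransformSales",
        "type": "ExecuteDataFlow",
        "dependsOn": [
            {"activity": "CopyOrders", "dependencyConditions": ["Succeeded"]},
            {"activity": "CopyCustomers", "dependencyConditions": ["Succeeded"]},
        ],
    },
]


def execution_waves(activities):
    """Group activities into waves whose dependencies are already satisfied."""
    # Map each activity to the set of activities it waits for.
    graph = {
        a["name"]: {dep["activity"] for dep in a.get("dependsOn", [])}
        for a in activities
    }
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything runnable right now
        waves.append(ready)
        sorter.done(*ready)
    return waves


print(execution_waves(activities))
```

Activities with no dependency between them land in the same wave, which matches the idea that independent activities in a pipeline can run in parallel while dependent ones wait.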

Triggering Mechanisms

To automate data movement, ADF provides different triggering mechanisms:

- Schedule triggers: Run pipelines at specified times.

- Event-based triggers: Initiate pipelines in response to events, such as the arrival of a new file in a storage account.

- Tumbling window triggers: Useful for batch processing scenarios, allowing pipelines to run at fixed intervals.

Advanced Features for Efficient Data Movement

To enhance efficiency, ADF offers features such as:

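- Data integration units (DIU): A measure of the compute power (a combination of CPU, memory, and network resources) allocated to a copy operation on the Azure integration runtime; raising it can speed up large copies.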

- Parallel copying: Enable simultaneous copying of data segments to speed up large data transfers.
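To make the idea of copying data segments in parallel more concrete, here is a small Python sketch. The `copy_settings` dictionary uses the Copy activity’s `parallelCopies` and `dataIntegrationUnits` properties with arbitrary example values, while `split_into_ranges` is a hypothetical helper that shows range partitioning in general terms; it is not a description of how ADF partitions data internally.

```python
# Example values only; appropriate settings depend on the source, sink, and data size.
copy_settings = {"parallelCopies": 4, "dataIntegrationUnits": 8}


def split_into_ranges(lower, upper, partitions):
    """Split the inclusive integer key range [lower, upper] into contiguous chunks."""
    total = upper - lower + 1
    base, extra = divmod(total, partitions)
    ranges = []
    start = lower
    for i in range(partitions):
        # Spread any remainder over the first `extra` chunks.
        size = base + (1 if i < extra else 0)
        if size == 0:
            break  # more partitions requested than keys available
        ranges.append((start, start + size - 1))
        start += size
    return ranges


# Each range could be read by a separate parallel copy.
for low, high in split_into_ranges(1, 10, copy_settings["parallelCopies"]):
    print(f"copy rows with key between {low} and {high}")
```

The ranges are contiguous and non-overlapping, so every row is copied exactly once no matter how many partitions are used.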

Combining these methods and features appropriately will significantly optimize your data movement tasks within Azure Data Factory. As you prepare for your interview, being familiar with these strategies and understanding when to apply them is fundamental.

5. What is the role of Integration Runtimes in Azure Data Factory?

Understanding the role of Integration Runtimes (IR) in Azure Data Factory (ADF) is crucial for tapping into the service’s full potential in cloud computing and data integration tasks. The integration runtime is the compute infrastructure ADF uses to carry out data movement, run data flows, dispatch activities to external compute services, and execute SSIS packages across different environments.

Integration Runtimes serve as the bridge that facilitates the communication between the Azure Data Factory and various data stores and computing environments. Depending on your specific scenario, there are three types of IR you’ll encounter: Azure, Self-hosted, and Azure-SSIS. Each plays a pivotal role in ensuring that data is efficiently processed and transferred where it needs to go.

- Azure Integration Runtime: Primarily used for data integration scenarios that are cloud-based. It enables data movement between different cloud services within Azure or across other cloud providers.

- Self-hosted Integration Runtime: Essential for scenarios where data and computing sources are on-premises or in a private network. It acts as a mediator, allowing secure data movement between on-premises data stores and ADF without exposing your data to the public internet.

- Azure-SSIS Integration Runtime: Specially designed for executing SQL Server Integration Services (SSIS) packages in Azure. It allows the lift and shift of SSIS workloads into Azure, facilitating cloud-based data integration and transformation projects.
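The choice between the three types can be summarized as a rule of thumb. The Python sketch below encodes that rule in a hypothetical helper, `choose_integration_runtime`, and shows how a linked service can point at a specific runtime through its `connectVia` property. The runtime and linked service names are made up, the definition is trimmed, and real decisions involve more factors (network setup, region, cost) than two booleans.

```python
def choose_integration_runtime(runs_ssis_packages, data_in_private_network):
    """Simplified rule of thumb for picking an integration runtime type."""
    if runs_ssis_packages:
        return "Azure-SSIS"
    if data_in_private_network:
        return "Self-hosted"
    return "Azure"


# A linked service can be bound to a named runtime via `connectVia`.
# "OnPremSelfHostedIR" is a hypothetical runtime name.
on_prem_sql_linked_service = {
    "name": "OnPremSqlServerLS",
    "properties": {
        "type": "SqlServer",
        "typeProperties": {"connectionString": "<placeholder>"},
        "connectVia": {
            "referenceName": "OnPremSelfHostedIR",
            "type": "IntegrationRuntimeReference",
        },
    },
}

print(choose_integration_runtime(runs_ssis_packages=False, data_in_private_network=True))
```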

For a detailed understanding of ADF’s architecture and how Integration Runtimes fit into the picture, Microsoft’s official documentation is an invaluable resource. Check out their Azure Data Factory Documentation for in-depth insights.

To summarize, Integration Runtimes in Azure Data Factory are critical for data movement and processing across different environments—cloud, on-premises, and hybrid scenarios. Whether you’re transferring data between cloud services, processing data securely within private networks, or running SSIS packages in Azure, understanding how to leverage IRs effectively will significantly impact your ADF projects’ success. Being well-versed in the types and functionalities of Integration Runtimes is essential for anyone looking to excel in working with Azure Data Factory.

6. Explain the concept of Linked Services in Azure Data Factory.

When diving into Azure Data Factory (ADF), understanding the role of Linked Services is pivotal. Think of Linked Services as the glue that holds the architecture of ADF together, acting as a bridge between ADF and external data sources or compute resources. It’s a foundational concept that enables you to streamline data integration tasks efficiently.

At its core, a Linked Service in ADF is analogous to a connection string in traditional database applications. It contains the necessary information for ADF to connect to external resources, be it data stores like Azure Blob Storage, SQL databases, or even compute services like Azure HDInsight for processing tasks. Essentially, Linked Services define how ADF connects to these resources, specifying parameters such as authentication credentials, the data source location, and other necessary properties.

Here’s a quick rundown of why Linked Services are essential:

- Enable Data Movement: By defining how to reach the source and destination of data, Linked Services facilitate the movement and transformation of data across diverse environments.

- Boost Flexibility: With various connectors available, you can easily link to a wide range of data sources and services, enhancing the adaptability of your data integration workflows.

- Simplify Security Management: By encapsulating authentication details, Linked Services contribute to a more secure and manageable approach to accessing external resources.

Consider this example where you’re setting up a Linked Service to an Azure SQL Database:

```json
{
  "name": "AzureSqlDatabaseLinkedService",
  "properties": {
    "type": "AzureSqlDatabase",
    "typeProperties": {
      "connectionString": {
        "value": "Data Source=sql.database.windows.net;Initial Catalog=YourDatabase;User Id=yourUsername;Password=yourPassword;",
        "type": "SecureString"
      }
    }
  }
}
```

This JSON snippet illustrates how you’d configure a Linked Service for Azure SQL Database, encapsulating details like the data source and authentication credentials securely.
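A common follow-up question is how to keep the password out of the definition altogether. One option is to reference a secret stored in Azure Key Vault instead of embedding it in the connection string. The Python sketch below builds such a definition as a dictionary; `KeyVaultLS` and `sql-password` are hypothetical names, the server name is a placeholder, and `has_inline_password` is an illustrative helper rather than an ADF feature.

```python
# The password is resolved from Key Vault at runtime instead of being stored inline.
linked_service = {
    "name": "AzureSqlDatabaseLinkedService",
    "properties": {
        "type": "AzureSqlDatabase",
        "typeProperties": {
            "connectionString": (
                "Data Source=<server>.database.windows.net;"
                "Initial Catalog=YourDatabase;User Id=yourUsername;"
            ),
            "password": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "KeyVaultLS",  # hypothetical Key Vault linked service
                    "type": "LinkedServiceReference",
                },
                "secretName": "sql-password",  # hypothetical secret name
            },
        },
    },
}


def has_inline_password(definition):
    """Return True if the connection string itself carries a password."""
    conn = definition["properties"]["typeProperties"]["connectionString"]
    # The connection string may be a plain string or a SecureString object.
    value = conn["value"] if isinstance(conn, dict) else conn
    return "password=" in value.lower()


print(has_inline_password(linked_service))
```

A check like this could run in a review step before definitions are committed to source control, where inline credentials are easy to leak.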

For a deeper dive into setting up and managing Linked Services, Microsoft’s official documentation provides comprehensive guidance and is an invaluable resource. Visit the Azure Data Factory documentation for detailed insights and examples.

7. How can you monitor and manage Azure Data Factory pipelines?

Monitoring and managing Azure Data Factory (ADF) pipelines is integral to ensuring that your data integration processes run smoothly and efficiently. With ADF’s robust monitoring capabilities, you’re able to keep a close eye on your pipelines, tracking their performance and diagnosing any issues that may arise. Let’s look at how you can leverage these features to maintain optimal pipeline health.

Use Azure Monitor

First off, Azure Monitor is your go-to tool for comprehensive monitoring. It collects, analyzes, and acts on telemetry data from various cloud and on-premises environments. Through Azure Monitor, you can gain insights into your ADF pipelines by setting up alerts and analyzing metrics and logs. For more detailed guidance, check out Microsoft’s official documentation on Azure Monitor.

Set Up Alerts and Notifications

Setting up alerts is crucial for staying on top of any issues. ADF allows you to configure alerts based on pipeline runs, activity runs, and trigger runs. These alerts can notify you through email, SMS, or even push notifications when certain conditions are met, such as failures or performance thresholds. This real-time feedback loop enables you to respond quickly to any potential issues.

Leverage ADF’s Monitoring Features

ADF’s built-in monitoring features, found in the Monitor hub of Azure Data Factory Studio, provide a user-friendly interface for tracking pipeline runs. Here, you can:

- View activity runs: Check the status, duration, and outcome of each activity within your pipelines.

- Debug pipelines: Use the debug feature to test your pipelines before publishing them. This is invaluable for spotting errors early in the development process.

- Examine triggers: Assess the performance and outcome of triggers that automate your pipeline executions.
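The kind of questions you ask of monitoring data can be sketched in a few lines of Python. The run records below are invented sample data whose field names (`runId`, `pipelineName`, `status`, `durationInMs`) are loosely modeled on pipeline run records, and both helpers are hypothetical. In practice this information would come from the monitoring views or from logs rather than from a hard-coded list.

```python
from collections import Counter

# Made-up sample data for illustration only.
sample_runs = [
    {"runId": "run-001", "pipelineName": "SamplePipeline", "status": "Succeeded", "durationInMs": 42_000},
    {"runId": "run-002", "pipelineName": "SamplePipeline", "status": "Failed", "durationInMs": 3_000},
    {"runId": "run-003", "pipelineName": "NightlyLoad", "status": "Failed", "durationInMs": 900_000},
]


def failures_by_pipeline(runs):
    """Count failed runs per pipeline name."""
    return dict(Counter(r["pipelineName"] for r in runs if r["status"] == "Failed"))


def slow_runs(runs, threshold_ms):
    """Return the IDs of runs that took longer than the threshold."""
    return [r["runId"] for r in runs if r["durationInMs"] > threshold_ms]


print(failures_by_pipeline(sample_runs))
print(slow_runs(sample_runs, threshold_ms=600_000))
```

These two checks correspond to the two alert conditions mentioned earlier: failures and performance thresholds.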

Use Azure Data Factory Analytics

For an even deeper analysis, Azure Data Factory Analytics is a monitoring solution that builds on Azure Monitor logs and brings pipeline, activity, and trigger run data together in a single view. Because the underlying logs live in a Log Analytics workspace, you can also query them from Power BI to create customized dashboards that provide insights into the efficiency and reliability of your pipelines. Refer to the ADF Analytics template for starting points on setting this up.

8. What is the difference between a pipeline and an activity in Azure Data Factory?

When you’re diving into Azure Data Factory (ADF), understanding the distinction between pipelines and activities is crucial for mastering data integration and workflow orchestration within ADF. This knowledge not only sharpens your skillset but also prepares you for potential interview questions that explore the depth of your understanding.

A pipeline in ADF serves as a high-level logical grouping mechanism for your data movement and data transformation tasks. Think of a pipeline as a manufacturing assembly line where each section of the line represents a step in the process of manufacturing a product. Similarly, a pipeline coordinates the execution of one or more activities, orchestrating tasks like data copy, data transformation, or the execution of a stored procedure, each contributing to the completion of a comprehensive data integration workflow.

On the other hand, an activity represents a single step within a pipeline. Activities are the building blocks of the pipeline, specifying the action to be performed, such as copying data from one location to another, transforming data using Azure Data Lake Analytics or Databricks, or executing a Hive query on HDInsight. There are various types of activities available in ADF, each designed to accomplish a specific task.

Here’s a simple way to visualize the relationship between pipelines and activities:

| Azure Data Factory Component | Description |
|---|---|
| Pipeline | A logical grouping of activities that performs a complete unit of work. |
| Activity | A single task within a pipeline, such as copying data, executing a query, or running a data flow. |

Understanding the pipeline-activity relationship is pivotal for designing effective data integration solutions using Azure Data Factory. For instance, you might design a pipeline that includes a copy activity to move data from an on-premises SQL Server to Azure Blob storage, followed by a data flow activity to transform the data.

For more in-depth insights into designing pipelines and activities in Azure Data Factory, Microsoft’s official documentation offers comprehensive guidance. It provides not only the theoretical underpinning but also practical, hands-on examples.

Remember, pipelines orchestrate the execution of activities; they don’t execute data processing tasks themselves. This distinction is key when planning your ADF projects or preparing for job interviews focused on Azure Data Factory. A solid grasp of these concepts will enhance your ability to design, implement, and troubleshoot ADF solutions effectively.

9. How can you schedule the execution of Azure Data Factory pipelines?

Scheduling the execution of Azure Data Factory (ADF) pipelines is crucial for automating your data workflows effectively. In ADF, you have several options to schedule pipelines, ensuring your data processing jobs run at the right time without manual intervention.

Trigger-Based Execution

The most common way to schedule ADF pipelines is by using triggers. Triggers in Azure Data Factory fall into three main types: schedule triggers, event-based triggers, and tumbling window triggers.

- Schedule Triggers allow you to run pipelines on a wall-clock schedule, such as daily, weekly, or at specific intervals. You can set this up in the ADF authoring UI or programmatically with the ADF SDKs. Here’s a basic example of a schedule trigger definition:

```json
{
  "name": "DailyTrigger",
  "properties": {
    "runtimeState": "Started",
    "pipelines": [
      {
        "pipelineReference": {
          "referenceName": "SamplePipeline",
          "type": "PipelineReference"
        },
        "parameters": {}
      }
    ],
    "type": "ScheduleTrigger",
    "typeProperties": {
      "recurrence": {
        "frequency": "Day",
        "interval": 1,
        "startTime": "2020-08-01T00:00:00Z",
        "timeZone": "UTC"
      }
    }
  }
}
```

- Event-based Triggers launch pipelines in response to an event, such as the arrival of a new file in a blob storage. This ensures pipelines execute as soon as the relevant data becomes available.
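For the event-based case, the following Python sketch shows roughly what a storage event trigger definition looks like as a dictionary, together with a simplified check of which blob paths would match its filters. The trigger name, container, folder, and pipeline name are hypothetical, the `scope` value is a placeholder for the storage account’s resource ID, and `would_fire` only approximates the path filtering with plain prefix and suffix matching.

```python
# Hypothetical trigger: fire when a .csv file lands in sales/incoming/.
blob_event_trigger = {
    "name": "NewSalesFileTrigger",
    "properties": {
        "type": "BlobEventsTrigger",
        "typeProperties": {
            "blobPathBeginsWith": "/sales/blobs/incoming/",
            "blobPathEndsWith": ".csv",
            "ignoreEmptyBlobs": True,
            "events": ["Microsoft.Storage.BlobCreated"],
            "scope": "<storage account resource ID>",
        },
        "pipelines": [
            {
                "pipelineReference": {
                    "referenceName": "SamplePipeline",
                    "type": "PipelineReference",
                }
            }
        ],
    },
}


def would_fire(trigger, blob_path):
    """Approximate the trigger's path filters with prefix/suffix matching."""
    props = trigger["properties"]["typeProperties"]
    return blob_path.startswith(props.get("blobPathBeginsWith", "")) and blob_path.endswith(
        props.get("blobPathEndsWith", "")
    )


print(would_fire(blob_event_trigger, "/sales/blobs/incoming/2024-01.csv"))
print(would_fire(blob_event_trigger, "/sales/blobs/archive/2023-12.csv"))
```

Narrow path filters matter in practice: a trigger that matches every blob in a busy storage account can start far more pipeline runs than intended.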

For more detailed guidance on creating and managing triggers, Microsoft’s official documentation is a great resource.

Tumbling Window Triggers

Tumbling window triggers are a more specialized option that supports backfilling data and handling late-arriving data. Unlike schedule triggers, they fire over a series of fixed-size, contiguous, non-overlapping time windows, so each window is processed exactly once.
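The window behavior is easy to see in a short Python sketch. The hypothetical `tumbling_windows` helper below lists every complete window between a start time and a cutoff, which is essentially the set of windows a backfill would need to process. It illustrates the windowing concept only and makes no claim about how ADF tracks window state.

```python
from datetime import datetime, timedelta, timezone


def tumbling_windows(start, size, until):
    """Return (window_start, window_end) pairs for every complete window before `until`."""
    windows = []
    window_start = start
    while window_start + size <= until:
        windows.append((window_start, window_start + size))
        window_start += size  # next window begins exactly where this one ends
    return windows


start = datetime(2020, 8, 1, tzinfo=timezone.utc)
cutoff = datetime(2020, 8, 4, 6, tzinfo=timezone.utc)

for window_start, window_end in tumbling_windows(start, timedelta(days=1), cutoff):
    print(window_start.isoformat(), "->", window_end.isoformat())
```

Because each window ends where the next begins, a pipeline that filters its source data by the window start and end times picks up every record once, with no gaps or overlaps.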

Combining these trigger types and configuring them according to your project requirements will ensure that your ADF pipelines run efficiently and reliably. From processing daily sales data to loading files as soon as they arrive, mastering scheduling in Azure Data Factory enables you to automate complex data workflows with precision.

10. Can you provide an example of using a data flow in Azure Data Factory?

When diving into Azure Data Factory (ADF), one of the powerful components you’ll encounter is the Data Flow. Data flows allow you to develop comprehensive data transformation solutions without writing a single line of code. Whether you’re crunching numbers, transforming data formats, or cleaning up your datasets, data flows in ADF make these tasks intuitive and efficient.

Imagine you’re working with sales data stored in an Azure SQL Database and you need to aggregate this data monthly before moving it to a Data Lake Store for further analysis. Here’s how you could leverage a data flow in ADF to accomplish this:

- Create a Source Dataset: Your first step is to define a source dataset in ADF, which connects to your Azure SQL Database. This dataset acts as the input to your data flow.

- Define the Data Flow: Next, you create a new data flow and add the source dataset as an input. Within the data flow, you can use various transformations. For aggregating sales data monthly, you might use the “Aggregate” transformation, grouping by the month and summing up sales figures.

- Specify a Sink Dataset: The output of your data flow needs a destination, or a sink. In this scenario, it’s the Data Lake Store. Define another dataset in ADF for the Data Lake Store and add it as the sink to your data flow.

- Transform and Load: Within the data flow, you map the aggregated results to the structure expected by the sink dataset. This process includes specifying column names and data types.

- Execute with a Pipeline: Finally, encapsulate the data flow within a pipeline and trigger its execution. You can schedule this pipeline to run monthly, ensuring your Data Lake Store always has the latest aggregated sales data.
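To make the Aggregate step concrete, the Python sketch below applies the same logic (group by month, sum the sales figures) to a few invented rows. The column names `sale_date` and `amount` and the helper `aggregate_monthly` are assumptions for illustration. A mapping data flow expresses this visually rather than in Python, so treat this purely as a model of what the transformation computes.

```python
from collections import defaultdict
from datetime import date

# Made-up source rows standing in for the sales table.
sales = [
    {"sale_date": date(2024, 1, 5), "amount": 120.0},
    {"sale_date": date(2024, 1, 20), "amount": 80.0},
    {"sale_date": date(2024, 2, 3), "amount": 50.0},
]


def aggregate_monthly(rows):
    """Group rows by calendar month and sum the amounts."""
    totals = defaultdict(float)
    for row in rows:
        month = row["sale_date"].strftime("%Y-%m")  # grouping key, e.g. "2024-01"
        totals[month] += row["amount"]
    # One output row per month, in chronological order, ready for the sink.
    return [{"month": month, "total_sales": total} for month, total in sorted(totals.items())]


for row in aggregate_monthly(sales):
    print(row)
```

The output has one row per month with exactly the two columns the sink expects, which is the shape the mapping step in the data flow is responsible for producing.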

For detailed guidance on setting up each component and transformation within a data flow, the Microsoft Documentation on Data Flows is an invaluable resource.

Also, it’s beneficial to reference the Azure Data Factory Pricing page to understand the cost implications of executing data flows within your ADF pipelines. Efficient use of resources in data flows can significantly impact overall costs.

Conclusion

Arming yourself with knowledge about Azure Data Factory’s components, architecture, and advanced features like mapping data flows and optimization techniques is crucial for acing your interview. Understanding Linked Services, scheduling executions with precision, and mastering data flows will not only help you answer technical questions confidently but also demonstrate your capability to handle real-life data integration tasks. Remember, the ability to apply these concepts in practical scenarios can set you apart from other candidates. So, dive deep into the details, practice scenario-based questions, and don’t forget to refer to Microsoft’s official documentation for the most accurate and comprehensive guidance. Your preparation today is the key to unlocking exciting opportunities in the world of data integration with Azure Data Factory.